A Comparative Study of RGB and Multispectral Sensor-Based Cotton Canopy Cover Modelling Using Multi-Temporal UAS Data

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Area and Sensors

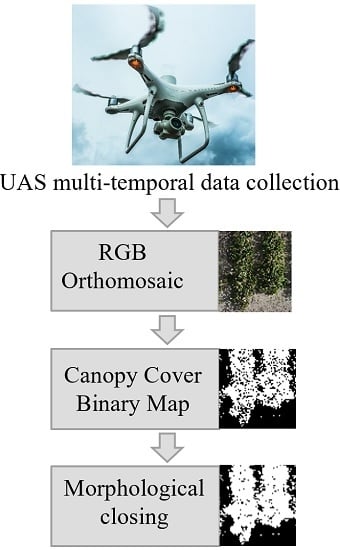

2.2. Data Collection and Preprocessing

2.3. Canopy Cover Computation

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Adhikari, P.; Gowda, P.; Marek, G.; Brauer, D.; Kisekka, I.; Northup, B.; Rocateli, A. Calibration and validation of CSM-CROPGRO-Cotton model using lysimeter data in the Texas High Plains. J. Contemp. Water Res. Educ. 2017, 162, 61–78.

- Phillips, R.L. Mobilizing science to break yield barriers. Crop Sci. 2010, 50, 99–108.

- Xu, R.; Li, C.; Paterson, A.H. Multispectral imaging and unmanned aerial systems for cotton plant phenotyping. PLoS ONE 2019, 14, e0205083.

- Pierpaoli, E.; Carli, G.; Pignatti, E.; Canavari, M. Drivers of precision agriculture technologies adoption: A literature review. Procedia Technol. 2013, 8, 61–69.

- Tokekar, P.; Vander Hook, J.; Mulla, D.; Isler, V. Sensor planning for a symbiotic UAV and UGV system for precision agriculture. IEEE Trans. Robot. 2016, 32, 1498–1511.

- Singh, K.K.; Frazier, A.E. A meta-analysis and review of unmanned aircraft system (UAS) imagery for terrestrial applications. Int. J. Remote Sens. 2018, 39, 5078–5098.

- Roth, L.; Streit, B. Predicting cover crop biomass by lightweight UAS-based RGB and NIR photography: An applied photogrammetric approach. Precis. Agric. 2018, 19, 93–114.

- Gevaert, C.M.; Suomalainen, J.; Tang, J.; Kooistra, L. Generation of spectral–temporal response surfaces by combining multispectral satellite and hyperspectral UAV imagery for precision agriculture applications. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 3140–3146.

- Trout, T.J.; Johnson, L.F.; Gartung, J. Remote sensing of canopy cover in horticultural crops. HortScience 2008, 43, 333–337.

- Nielsen, D.C.; Miceli-Garcia, J.J.; Lyon, D.J. Canopy cover and leaf area index relationships for wheat, triticale, and corn. Agron. J. 2012, 104, 1569–1573.

- Chopping, M. CANAPI: Canopy analysis with panchromatic imagery. Remote Sens. Lett. 2011, 2, 21–29.

- Halperin, J.; LeMay, V.; Chidumayo, E.; Verchot, L.; Marshall, P. Model-based estimation of above-ground biomass in the miombo ecoregion of Zambia. For. Ecosyst. 2016, 3, 14.

- Hansen, M.C.; DeFries, R.S.; Townshend, J.R.; Carroll, M.; DiMiceli, C.; Sohlberg, R.A. Global percent tree cover at a spatial resolution of 500 meters: First results of the MODIS vegetation continuous fields algorithm. Earth Interact. 2003, 7, 1–15.

- Korhonen, L.; Hovi, A.; Rönnholm, P.; Rautiainen, M. The accuracy of large-area forest canopy cover estimation using Landsat in boreal region. Int. J. Appl. Earth Obs. Geoinf. 2016, 53, 118–127.

- Chemura, A.; Mutanga, O.; Odindi, J. Empirical modeling of leaf chlorophyll content in coffee (Coffea arabica) plantations with Sentinel-2 MSI data: Effects of spectral settings, spatial resolution, and crop canopy cover. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 5541–5550.

- Melin, M.; Korhonen, L.; Kukkonen, M.; Packalen, P. Assessing the performance of aerial image point cloud and spectral metrics in predicting boreal forest canopy cover. ISPRS J. Photogramm. Remote Sens. 2017, 129, 77–85.

- Korhonen, L.; Ali-Sisto, D.; Tokola, T. Tropical forest canopy cover estimation using satellite imagery and airborne lidar reference data. Silva Fenn. 2015, 49, 1–18.

- Li, W.; Niu, Z.; Huang, N.; Wang, C.; Gao, S.; Wu, C. Airborne lidar technique for estimating biomass components of maize: A case study in Zhangye City, Northwest China. Ecol. Indic. 2015, 57, 486–496.

- Tattaris, M.; Reynolds, M.P.; Chapman, S.C. A direct comparison of remote sensing approaches for high-throughput phenotyping in plant breeding. Front. Plant Sci. 2016, 7, 1131.

- Chen, A.; Orlov-Levin, V.; Elharar, O.; Meron, M. Comparing satellite and high-resolution visible and thermal aerial imaging of field crops for precision irrigation management and plant biomass forecast. In Precision Agriculture’19; Wageningen Academic Publishers: Wageningen, The Netherlands, 2019; pp. 37–44.

- Korhonen, L.; Korpela, I.; Heiskanen, J.; Maltamo, M. Airborne discrete-return lidar data in the estimation of vertical canopy cover, angular canopy closure and leaf area index. Remote Sens. Environ. 2011, 115, 1065–1080.

- Anderson, K.E.; Glenn, N.F.; Spaete, L.P.; Shinneman, D.J.; Pilliod, D.S.; Arkle, R.S.; McIlroy, S.K.; Derryberry, D.R. Estimating vegetation biomass and cover across large plots in shrub and grass dominated drylands using terrestrial lidar and machine learning. Ecol. Indic. 2018, 84, 793–802.

- Ma, Q.; Su, Y.; Guo, Q. Comparison of canopy cover estimations from airborne lidar, aerial imagery, and satellite imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 4225–4236.

- Holman, F.; Riche, A.; Michalski, A.; Castle, M.; Wooster, M.; Hawkesford, M. High throughput field phenotyping of wheat plant height and growth rate in field plot trials using UAV based remote sensing. Remote Sens. 2016, 8, 1031.

- Chianucci, F.; Disperati, L.; Guzzi, D.; Bianchini, D.; Nardino, V.; Lastri, C.; Rindinella, A.; Corona, P. Estimation of canopy attributes in beech forests using true colour digital images from a small fixed-wing UAV. Int. J. Appl. Earth Obs. Geoinf. 2016, 47, 60–68.

- Fernandez-Gallego, J.A.; Kefauver, S.C.; Kerfal, S.; Araus, J.L. Comparative canopy cover estimation using RGB images from UAV and ground. In Remote Sensing for Agriculture, Ecosystems, and Hydrology XX; International Society for Optics and Photonics: Berlin, Germany, 2018; p. 107830J.

- Ashapure, A.; Oh, S.; Marconi, T.G.; Chang, A.; Jung, J.; Landivar, J.; Enciso, J. Unmanned aerial system based tomato yield estimation using machine learning. In Autonomous Air and Ground Sensing Systems for Agricultural Optimization and Phenotyping IV; International Society for Optics and Photonics: Baltimore, MD, USA, 2019.

- Chu, T.; Chen, R.; Landivar, J.A.; Maeda, M.M.; Yang, C.; Starek, M.J. Cotton growth modeling and assessment using unmanned aircraft system visual-band imagery. J. Appl. Remote Sens. 2016, 10, 036018.

- Ballesteros, R.; Ortega, J.; Hernández, D.; Moreno, M. Applications of georeferenced high-resolution images obtained with unmanned aerial vehicles. Part II: Application to maize and onion crops of a semi-arid region in Spain. Precis. Agric. 2014, 15, 593–614.

- Ballesteros, R.; Ortega, J.F.; Hernandez, D.; Moreno, M.A. Onion biomass monitoring using UAV-based RGB imaging. Precis. Agric. 2018, 19, 840–857.

- Ashapure, A.; Jung, J.; Yeom, J.; Chang, A.; Maeda, M.; Maeda, A.; Landivar, J. A novel framework to detect conventional tillage and no-tillage cropping system effect on cotton growth and development using multi-temporal UAS data. ISPRS J. Photogramm. Remote Sens. 2019, 152, 49–64.

- Makanza, R.; Zaman-Allah, M.; Cairns, J.; Magorokosho, C.; Tarekegne, A.; Olsen, M.; Prasanna, B. High-throughput phenotyping of canopy cover and senescence in maize field trials using aerial digital canopy imaging. Remote Sens. 2018, 10, 330.

- Clevers, J.; Kooistra, L.; Van Den Brande, M. Using Sentinel-2 data for retrieving LAI and leaf and canopy chlorophyll content of a potato crop. Remote Sens. 2017, 9, 405.

- Pauly, K. Applying conventional vegetation vigor indices to UAS-derived orthomosaics: Issues and considerations. In Proceedings of the International Conference of Precision Agriculture (ICPA), Sacramento, CA, USA, 20–23 July 2014.

- Booth, D.T.; Cox, S.E.; Berryman, R.D. Point sampling digital imagery with ‘SamplePoint’. Environ. Monit. Assess. 2006, 123, 97–108.

- Richardson, M.; Karcher, D.; Purcell, L. Quantifying turfgrass cover using digital image analysis. Crop Sci. 2001, 41, 1884–1888.

- Lee, K.J.; Lee, B.W. Estimating canopy cover from color digital camera image of rice field. J. Crop Sci. Biotechnol. 2011, 14, 151–155.

- Patrignani, A.; Ochsner, T.E. Canopeo: A powerful new tool for measuring fractional green canopy cover. Agron. J. 2015, 107, 2312–2320.

- Torres-Sánchez, J.; Peña, J.M.; de Castro, A.I.; López-Granados, F. Multi-temporal mapping of the vegetation fraction in early-season wheat fields using images from UAV. Comput. Electron. Agric. 2014, 103, 104–113.

- Fang, S.; Tang, W.; Peng, Y.; Gong, Y.; Dai, C.; Chai, R.; Liu, K. Remote estimation of vegetation fraction and flower fraction in oilseed rape with unmanned aerial vehicle data. Remote Sens. 2016, 8, 416.

- Marcial-Pablo, M.D.J.; Gonzalez-Sanchez, A.; Jimenez-Jimenez, S.I.; Ontiveros-Capurata, R.E.; Ojeda-Bustamante, W. Estimation of vegetation fraction using RGB and multispectral images from UAV. Int. J. Remote Sens. 2019, 40, 420–438.

- Lima-Cueto, F.J.; Blanco-Sepúlveda, R.; Gómez-Moreno, M.L.; Galacho-Jiménez, F.B. Using vegetation indices and a UAV imaging platform to quantify the density of vegetation ground cover in olive groves (Olea europaea L.) in southern Spain. Remote Sens. 2019, 11, 2564.

- Westoby, M.J.; Brasington, J.; Glasser, N.F.; Hambrey, M.J.; Reynolds, J.M. ‘Structure-from-motion’ photogrammetry: A low-cost, effective tool for geoscience applications. Geomorphology 2012, 179, 300–314.

- Rouse, J.W., Jr.; Haas, R.; Schell, J.; Deering, D. Monitoring Vegetation Systems in the Great Plains with ERTS; Texas A&M Univ.: College Station, TX, USA, 1974.

- Hulvey, K.B.; Thomas, K.; Thacker, E. A comparison of two herbaceous cover sampling methods to assess ecosystem services in high-shrub rangelands: Photography-based grid point intercept (GPI) versus quadrat sampling. Rangelands 2018, 40, 152–159.

- Woebbecke, D.M.; Meyer, G.E.; Von Bargen, K.; Mortensen, D. Color indices for weed identification under various soil, residue, and lighting conditions. Trans. ASAE 1995, 38, 259–269.

- Bendig, J.; Yu, K.; Aasen, H.; Bolten, A.; Bennertz, S.; Broscheit, J.; Gnyp, M.L.; Bareth, G. Combining UAV-based plant height from crop surface models, visible, and near infrared vegetation indices for biomass monitoring in barley. Int. J. Appl. Earth Obs. Geoinf. 2015, 39, 79–87.

- Dougherty, E.R. An Introduction to Morphological Image Processing; SPIE: Bellingham, WA, USA, 1992.

| SlantRange 3p Sensor Band | Peak Wavelength (nm) | FWHM (nm) |
|---|---|---|
| Green | 560 | 40 |
| Red | 655 | 35 |
| Red-edge | 710 | 20 |
| Near infrared | 830 | 110 |

| Date | Flight Altitude, RGB (m) | Flight Altitude, Multispectral (m) | Overlap, RGB (%) | Overlap, Multispectral (%) | Spatial Resolution, RGB (cm) | Spatial Resolution, Multispectral (cm) |
|---|---|---|---|---|---|---|
| 24 April 2017 | 20 | 30 | 85 | 75 | 0.51 | 0.93 |
| 5 May 2017 | 20 | 25 | 85 | 70 | 0.50 | 0.85 |
| 12 May 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.81 |
| 20 May 2017 | 20 | 25 | 85 | 70 | 0.52 | 0.82 |
| 30 May 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.85 |
| 7 June 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.83 |
| 19 June 2017 | 20 | 25 | 85 | 70 | 0.52 | 0.81 |
| 5 July 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.81 |
| 10 July 2017 | 20 | 25 | 85 | 70 | 0.50 | 0.83 |
| 18 July 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.82 |
| 23 July 2017 | 20 | 25 | 85 | 70 | 0.51 | 0.82 |
| 23 April 2018 | 35 | 47 | 80 | 70 | 0.73 | 1.61 |
| 7 May 2018 | 35 | 47 | 80 | 70 | 0.69 | 1.65 |
| 14 May 2018 | 35 | 47 | 80 | 70 | 0.71 | 1.61 |
| 23 May 2018 | 35 | 47 | 80 | 70 | 0.71 | 1.64 |
| 1 June 2018 | 37 | 47 | 80 | 70 | 0.73 | 1.62 |
| 6 June 2018 | 35 | 47 | 80 | 70 | 0.72 | 1.61 |
| 13 June 2018 | 35 | 47 | 80 | 70 | 0.71 | 1.63 |
| 3 July 2018 | 35 | 47 | 80 | 70 | 0.71 | 1.61 |
| 9 July 2018 | 35 | 47 | 80 | 70 | 0.72 | 1.63 |
| 19 July 2018 | 35 | 47 | 80 | 70 | 0.70 | 1.62 |

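| Vegetation Index | Formula | Reference |
|---|---|---|
| Canopeo | $R/G < P_1$, $B/G < P_2$, and $2G - R - B > P_3$ | [38] |
| ExG | $2g - r - b$, where $r = R/(R+G+B)$, $g = G/(R+G+B)$, $b = B/(R+G+B)$ | [46] |
| MGRVI | $(G^2 - R^2)/(G^2 + R^2)$ | [47] |
| RGBVI | $(G^2 - B \cdot R)/(G^2 + B \cdot R)$ | [47] |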
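As a rough illustration of the indices listed in the table above, the following minimal sketch evaluates them for a single pixel. It assumes the commonly published forms of each index from the cited references [38,46,47]: ExG on chromatic coordinates, MGRVI and RGBVI on raw band values, and Canopeo as a three-condition green test. The function names and the default Canopeo parameters (`p1`, `p2`, `p3`) are illustrative assumptions, not values taken from this study.

```python
def chromatic(r, g, b):
    """Scale raw band values so that the three coordinates sum to 1."""
    total = r + g + b
    if total == 0:
        return 0.0, 0.0, 0.0
    return r / total, g / total, b / total


def exg(r, g, b):
    """Excess green index, 2g - r - b, on chromatic coordinates (assumed form)."""
    rn, gn, bn = chromatic(r, g, b)
    return 2 * gn - rn - bn


def mgrvi(r, g, b):
    """Modified green-red vegetation index, (G^2 - R^2) / (G^2 + R^2)."""
    denom = g * g + r * r
    return (g * g - r * r) / denom if denom else 0.0


def rgbvi(r, g, b):
    """Red-green-blue vegetation index, (G^2 - B*R) / (G^2 + B*R)."""
    denom = g * g + b * r
    return (g * g - b * r) / denom if denom else 0.0


def canopeo_is_green(r, g, b, p1=0.95, p2=0.95, p3=20):
    """Canopeo-style test: True when the pixel counts as green canopy.

    p1, p2, p3 are assumed defaults for 8-bit bands, not study values.
    """
    if g == 0:
        return False
    return r / g < p1 and b / g < p2 and 2 * g - r - b > p3


# Hypothetical 8-bit pixel (R, G, B) dominated by green.
pixel = (100, 200, 50)
print(exg(*pixel), mgrvi(*pixel), rgbvi(*pixel), canopeo_is_green(*pixel))
```

Canopeo differs from the other three in that it yields a binary class directly, whereas ExG, MGRVI, and RGBVI give a continuous value that still has to be thresholded.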

| VI | Threshold | Range |
|---|---|---|
| NDVI | 0.6 | 0 to 1 |
| ExG | 0.2 | −2 to 2 |
| MGRVI | 0.15 | −1 to 1 |
| RGBVI | 0.15 | −1 to 1 |
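The thresholds above can be turned into a canopy cover (CC) percentage by classifying each pixel and counting the canopy fraction. The sketch below is one plausible reading rather than the authors' implementation: it assumes a pixel is canopy when its index value is strictly greater than the threshold, and it uses the standard NDVI definition, (NIR − Red)/(NIR + Red). The function names and the sample grid are hypothetical.

```python
# Thresholds copied from the table above.
THRESHOLDS = {"NDVI": 0.6, "ExG": 0.2, "MGRVI": 0.15, "RGBVI": 0.15}


def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel."""
    denom = nir + red
    return (nir - red) / denom if denom else 0.0


def canopy_cover(vi_grid, threshold):
    """Percentage of pixels whose index value exceeds the threshold.

    vi_grid is a list of rows, each a list of per-pixel index values.
    The strict '>' comparison is an assumption.
    """
    pixels = [value for row in vi_grid for value in row]
    if not pixels:
        raise ValueError("vi_grid contains no pixels")
    canopy = sum(1 for value in pixels if value > threshold)
    return 100.0 * canopy / len(pixels)


# Hypothetical 2 x 3 grid of NDVI values for one plot.
plot_ndvi = [
    [0.82, 0.75, 0.10],
    [0.64, 0.58, 0.05],
]
print(canopy_cover(plot_ndvi, THRESHOLDS["NDVI"]))  # 3 of 6 pixels -> 50.0
```

The same `canopy_cover` function applies to any of the RGB indices by passing the matching threshold, which keeps the NDVI-based and RGB-based estimates directly comparable.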

Average RMSE with respect to NDVI-based CC estimation (%):

| RGB-Based Method | 2017 Experiment, Before MC | 2017 Experiment, After MC | 2018 Experiment, Before MC | 2018 Experiment, After MC |
|---|---|---|---|---|
| Canopeo | 17.87 | 13.34 | 15.56 | 9.73 |
| ExG | 16.97 | 13.00 | 15.51 | 8.67 |
| MGRVI | 13.11 | 10.35 | 14.34 | 6.95 |
| RGBVI | 7.44 | 2.94 | 8.85 | 2.82 |
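For reference, the RMSE values above compare an RGB-based CC estimate with the NDVI-based one. The sketch below shows how such an RMSE could be computed from paired per-plot CC values, and how the relative reduction after MC follows from the tabulated numbers. The per-plot CC lists are made up for illustration; only the `RMSE_TABLE` entries are taken from the table.

```python
import math


def rmse(estimates, reference):
    """Root mean square error between two equal-length sequences."""
    if len(estimates) != len(reference) or not estimates:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(e - r) ** 2 for e, r in zip(estimates, reference)]
    return math.sqrt(sum(squared) / len(squared))


def relative_reduction(before, after):
    """Percentage by which 'after' is lower than 'before'."""
    return 100.0 * (before - after) / before


# (before MC, after MC) RMSE in %, copied from the table above.
RMSE_TABLE = {
    "Canopeo": {"2017": (17.87, 13.34), "2018": (15.56, 9.73)},
    "ExG": {"2017": (16.97, 13.00), "2018": (15.51, 8.67)},
    "MGRVI": {"2017": (13.11, 10.35), "2018": (14.34, 6.95)},
    "RGBVI": {"2017": (7.44, 2.94), "2018": (8.85, 2.82)},
}

for method, years in RMSE_TABLE.items():
    for year, (before, after) in years.items():
        drop = relative_reduction(before, after)
        print(f"{method} {year}: {before} -> {after} ({drop:.1f}% lower)")

# Hypothetical per-plot CC values (%), RGB-based vs NDVI-based.
rgb_cc = [10.0, 20.0, 30.0]
ndvi_cc = [12.0, 18.0, 33.0]
print(round(rmse(rgb_cc, ndvi_cc), 2))
```

Read this way, RGBVI has both the lowest RMSE and the largest relative reduction after MC in each year.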

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ashapure, A.; Jung, J.; Chang, A.; Oh, S.; Maeda, M.; Landivar, J. A Comparative Study of RGB and Multispectral Sensor-Based Cotton Canopy Cover Modelling Using Multi-Temporal UAS Data. Remote Sens. 2019, 11, 2757. https://doi.org/10.3390/rs11232757
